mirror of https://mau.dev/maunium/synapse.git, synced 2024-10-01 01:36:05 -04:00

Revert accidental fast-forward merge from v1.49.0rc1

Revert "Sort internal changes in changelog"
Revert "Update CHANGES.md"
Revert "1.49.0rc1"
Revert "Revert "Move `glob_to_regex` and `re_word_boundary` to `matrix-python-common` (#11505) (#11527)"
Revert "Refactors in `_generate_sync_entry_for_rooms` (#11515)"
Revert "Correctly register shutdown handler for presence workers (#11518)"
Revert "Fix `ModuleApi.looping_background_call` for non-async functions (#11524)"
Revert "Fix 'delete room' admin api to work on incomplete rooms (#11523)"
Revert "Correctly ignore invites from ignored users (#11511)"
Revert "Fix the test breakage introduced by #11435 as a result of concurrent PRs (#11522)"
Revert "Stabilise support for MSC2918 refresh tokens as they have now been merged into the Matrix specification. (#11435)"
Revert "Save the OIDC session ID (sid) with the device on login (#11482)"
Revert "Add admin API to get some information about federation status (#11407)"
Revert "Include bundled aggregations in /sync and related fixes (#11478)"
Revert "Move `glob_to_regex` and `re_word_boundary` to `matrix-python-common` (#11505)"
Revert "Update backward extremity docs to make it clear that it does not indicate whether we have fetched an events' `prev_events` (#11469)"
Revert "Support configuring the lifetime of non-refreshable access tokens separately to refreshable access tokens. (#11445)"
Revert "Add type hints to `synapse/tests/rest/admin` (#11501)"
Revert "Revert accidental commits to develop."
Revert "Newsfile"
Revert "Give `tests.server.setup_test_homeserver` (nominally!) the same behaviour"
Revert "Move `tests.utils.setup_test_homeserver` to `tests.server`"
Revert "Convert one of the `setup_test_homeserver`s to `make_test_homeserver_synchronous`"
Revert "Disambiguate queries on `state_key` (#11497)"
Revert "Comments on the /sync tentacles (#11494)"
Revert "Clean up tests.storage.test_appservice (#11492)"
Revert "Clean up `tests.storage.test_main` to remove use of legacy code. (#11493)"
Revert "Clean up `tests.test_visibility` to remove legacy code. (#11495)"
Revert "Minor cleanup on recently ported doc pages (#11466)"
Revert "Add most of the missing type hints to `synapse.federation`. (#11483)"
Revert "Avoid waiting for zombie processes in `synctl stop` (#11490)"
Revert "Fix media repository failing when media store path contains symlinks (#11446)"
Revert "Add type annotations to `tests.storage.test_appservice`. (#11488)"
Revert "`scripts-dev/sign_json`: support for signing events (#11486)"
Revert "Add MSC3030 experimental client and federation API endpoints to get the closest event to a given timestamp (#9445)"
Revert "Port wiki pages to documentation website (#11402)"
Revert "Add a license header and comment. (#11479)"
Revert "Clean-up get_version_string (#11468)"
Revert "Link background update controller docs to summary (#11475)"
Revert "Additional type hints for config module. (#11465)"
Revert "Register the login redirect endpoint for v3. (#11451)"
Revert "Update openid.md"
Revert "Remove mention of OIDC certification from Dex (#11470)"
Revert "Add a note about huge pages to our Postgres doc (#11467)"
Revert "Don't start Synapse master process if `worker_app` is set (#11416)"
Revert "Expose worker & homeserver as entrypoints in `setup.py` (#11449)"
Revert "Bundle relations of relations into the `/relations` result. (#11284)"
Revert "Fix `LruCache` corruption bug with a `size_callback` that can return 0 (#11454)"
Revert "Eliminate a few `Any`s in `LruCache` type hints (#11453)"
Revert "Remove unnecessary `json.dumps` from `tests.rest.admin` (#11461)"
Revert "Merge branch 'master' into develop"

This reverts commit 26b5d2320f.
This reverts commit bce4220f38.
This reverts commit 966b5d0fa0.
This reverts commit 088d748f2c.
This reverts commit 14d593f72d.
This reverts commit 2a3ec6facf.
This reverts commit eccc49d755.
This reverts commit b1ecd19c5d.
This reverts commit 9c55dedc8c.
This reverts commit 2d42e586a8.
This reverts commit 2f053f3f82.
This reverts commit a15a893df8.
This reverts commit 8b4b153c9e.
This reverts commit 494ebd7347.
This reverts commit a77c369897.
This reverts commit 4eb77965cd.
This reverts commit 637df95de6.
This reverts commit e5f426cd54.
This reverts commit 8cd68b8102.
This reverts commit 6cae125e20.
This reverts commit 7be88fbf48.
This reverts commit b3fd99b74a.
This reverts commit f7ec6e7d9e.
This reverts commit 5640992d17.
This reverts commit d26808dd85.
This reverts commit f91624a595.
This reverts commit 16d39a5490.
This reverts commit 8a4c296987.
This reverts commit 49e1356ee3.
This reverts commit d2279f471b.
This reverts commit b50e39df57.
This reverts commit 858d80bf0f.
This reverts commit 435f044807.
This reverts commit f61462e1be.
This reverts commit a6f1a3abec.
This reverts commit 84dc50e160.
This reverts commit ed635d3285.
This reverts commit 7b62791e00.
This reverts commit 153194c771.
This reverts commit f44d729d4c.
This reverts commit a265fbd397.
This reverts commit b9fef1a7cd.
This reverts commit b0eb64ff7b.
This reverts commit f1795463bf.
This reverts commit 70cbb1a5e3.
This reverts commit 42bf020463.
This reverts commit 379f2650cf.
This reverts commit 7ff22d6da4.
This reverts commit 5a0b652d36.
This reverts commit 432a174bc1.
This reverts commit b14f8a1baf, reversing changes made to e713855dca.

parent 26b5d2320f
commit 158d73ebdd

2

.github/workflows/tests.yml

vendored


@@ -374,7 +374,7 @@ jobs:
         working-directory: complement/dockerfiles

       # Run Complement
-      - run: go test -v -tags synapse_blacklist,msc2403 ./tests/...
+      - run: go test -v -tags synapse_blacklist,msc2403,msc2946,msc3083 ./tests/...
         env:
           COMPLEMENT_BASE_IMAGE: complement-synapse:latest
         working-directory: complement

93

CHANGES.md


@@ -1,96 +1,3 @@
-Synapse 1.49.0rc1 (2021-12-07)
-==============================
-
-We've decided to move the existing, somewhat stagnant pages from the GitHub wiki
-to the [documentation website](https://matrix-org.github.io/synapse/latest/).
-
-This was done for two reasons. The first was to ensure that changes are checked by
-multiple authors before being committed (everyone makes mistakes!) and the second
-was visibility of the documentation. Not everyone knows that Synapse has some very
-useful information hidden away in its GitHub wiki pages. Bringing them to the
-documentation website should help with visibility, as well as keep all Synapse documentation
-in one, easily-searchable location.
-
-Note that contributions to the documentation website happen through [GitHub pull
-requests](https://github.com/matrix-org/synapse/pulls). Please visit [#synapse-dev:matrix.org](https://matrix.to/#/#synapse-dev:matrix.org)
-if you need help with the process!
-
-
-Features
---------
-
-- Add [MSC3030](https://github.com/matrix-org/matrix-doc/pull/3030) experimental client and federation API endpoints to get the closest event to a given timestamp. ([\#9445](https://github.com/matrix-org/synapse/issues/9445))
-- Include bundled relation aggregations during a limited `/sync` request and `/relations` request, per [MSC2675](https://github.com/matrix-org/matrix-doc/pull/2675). ([\#11284](https://github.com/matrix-org/synapse/issues/11284), [\#11478](https://github.com/matrix-org/synapse/issues/11478))
-- Add plugin support for controlling database background updates. ([\#11306](https://github.com/matrix-org/synapse/issues/11306), [\#11475](https://github.com/matrix-org/synapse/issues/11475), [\#11479](https://github.com/matrix-org/synapse/issues/11479))
-- Support the stable API endpoints for [MSC2946](https://github.com/matrix-org/matrix-doc/pull/2946): the room `/hierarchy` endpoint. ([\#11329](https://github.com/matrix-org/synapse/issues/11329))
-- Add admin API to get some information about federation status with remote servers. ([\#11407](https://github.com/matrix-org/synapse/issues/11407))
-- Support expiry of refresh tokens and expiry of the overall session when refresh tokens are in use. ([\#11425](https://github.com/matrix-org/synapse/issues/11425))
-- Stabilise support for [MSC2918](https://github.com/matrix-org/matrix-doc/blob/main/proposals/2918-refreshtokens.md#msc2918-refresh-tokens) refresh tokens as they have now been merged into the Matrix specification. ([\#11435](https://github.com/matrix-org/synapse/issues/11435), [\#11522](https://github.com/matrix-org/synapse/issues/11522))
-- Update [MSC2918 refresh token](https://github.com/matrix-org/matrix-doc/blob/main/proposals/2918-refreshtokens.md#msc2918-refresh-tokens) support to confirm with the latest revision: accept the `refresh_tokens` parameter in the request body rather than in the URL parameters. ([\#11430](https://github.com/matrix-org/synapse/issues/11430))
-- Support configuring the lifetime of non-refreshable access tokens separately to refreshable access tokens. ([\#11445](https://github.com/matrix-org/synapse/issues/11445))
-- Expose `synapse_homeserver` and `synapse_worker` commands as entry points to run Synapse's main process and worker processes, respectively. Contributed by @Ma27. ([\#11449](https://github.com/matrix-org/synapse/issues/11449))
-- `synctl stop` will now wait for Synapse to exit before returning. ([\#11459](https://github.com/matrix-org/synapse/issues/11459), [\#11490](https://github.com/matrix-org/synapse/issues/11490))
-- Extend the "delete room" admin api to work correctly on rooms which have previously been partially deleted. ([\#11523](https://github.com/matrix-org/synapse/issues/11523))
-- Add support for the `/_matrix/client/v3/login/sso/redirect/{idpId}` API from Matrix v1.1. This endpoint was overlooked when support for v3 endpoints was added in Synapse 1.48.0rc1. ([\#11451](https://github.com/matrix-org/synapse/issues/11451))
-
-

-Bugfixes
---------
-
-- Fix using [MSC2716](https://github.com/matrix-org/matrix-doc/pull/2716) batch sending in combination with event persistence workers. Contributed by @tulir at Beeper. ([\#11220](https://github.com/matrix-org/synapse/issues/11220))
-- Fix a long-standing bug where all requests that read events from the database could get stuck as a result of losing the database connection, properly this time. Also fix a race condition introduced in the previous insufficient fix in Synapse 1.47.0. ([\#11376](https://github.com/matrix-org/synapse/issues/11376))
-- The `/send_join` response now includes the stable `event` field instead of the unstable field from [MSC3083](https://github.com/matrix-org/matrix-doc/pull/3083). ([\#11413](https://github.com/matrix-org/synapse/issues/11413))
-- Fix a bug introduced in Synapse 1.47.0 where `send_join` could fail due to an outdated `ijson` version. ([\#11439](https://github.com/matrix-org/synapse/issues/11439), [\#11441](https://github.com/matrix-org/synapse/issues/11441), [\#11460](https://github.com/matrix-org/synapse/issues/11460))
-- Fix a bug introduced in Synapse 1.36.0 which could cause problems fetching event-signing keys from trusted key servers. ([\#11440](https://github.com/matrix-org/synapse/issues/11440))
-- Fix a bug introduced in Synapse 1.47.1 where the media repository would fail to work if the media store path contained any symbolic links. ([\#11446](https://github.com/matrix-org/synapse/issues/11446))
-- Fix an `LruCache` corruption bug, introduced in Synapse 1.38.0, that would cause certain requests to fail until the next Synapse restart. ([\#11454](https://github.com/matrix-org/synapse/issues/11454))
-- Fix a long-standing bug where invites from ignored users were included in incremental syncs. ([\#11511](https://github.com/matrix-org/synapse/issues/11511))
-- Fix a regression in Synapse 1.48.0 where presence workers would not clear their presence updates over replication on shutdown. ([\#11518](https://github.com/matrix-org/synapse/issues/11518))
-- Fix a regression in Synapse 1.48.0 where the module API's `looping_background_call` method would spam errors to the logs when given a non-async function. ([\#11524](https://github.com/matrix-org/synapse/issues/11524))
-
-
-Updates to the Docker image
----------------------------
-
-- Update `Dockerfile-workers` to healthcheck all workers in the container. ([\#11429](https://github.com/matrix-org/synapse/issues/11429))
-
-
-Improved Documentation
-----------------------
-
-- Update the media repository documentation. ([\#11415](https://github.com/matrix-org/synapse/issues/11415))
-- Update section about backward extremities in the room DAG concepts doc to correct the misconception about backward extremities indicating whether we have fetched an events' `prev_events`. ([\#11469](https://github.com/matrix-org/synapse/issues/11469))
-
-

-Internal Changes
-----------------
-
-- Add `Final` annotation to string constants in `synapse.api.constants` so that they get typed as `Literal`s. ([\#11356](https://github.com/matrix-org/synapse/issues/11356))
-- Add a check to ensure that users cannot start the Synapse master process when `worker_app` is set. ([\#11416](https://github.com/matrix-org/synapse/issues/11416))
-- Add a note about postgres memory management and hugepages to postgres doc. ([\#11467](https://github.com/matrix-org/synapse/issues/11467))
-- Add missing type hints to `synapse.config` module. ([\#11465](https://github.com/matrix-org/synapse/issues/11465))
-- Add missing type hints to `synapse.federation`. ([\#11483](https://github.com/matrix-org/synapse/issues/11483))
-- Add type annotations to `tests.storage.test_appservice`. ([\#11488](https://github.com/matrix-org/synapse/issues/11488), [\#11492](https://github.com/matrix-org/synapse/issues/11492))
-- Add type annotations to some of the configuration surrounding refresh tokens. ([\#11428](https://github.com/matrix-org/synapse/issues/11428))
-- Add type hints to `synapse/tests/rest/admin`. ([\#11501](https://github.com/matrix-org/synapse/issues/11501))
-- Add type hints to storage classes. ([\#11411](https://github.com/matrix-org/synapse/issues/11411))
-- Add wiki pages to documentation website. ([\#11402](https://github.com/matrix-org/synapse/issues/11402))
-- Clean up `tests.storage.test_main` to remove use of legacy code. ([\#11493](https://github.com/matrix-org/synapse/issues/11493))
-- Clean up `tests.test_visibility` to remove legacy code. ([\#11495](https://github.com/matrix-org/synapse/issues/11495))
-- Convert status codes to `HTTPStatus` in `synapse.rest.admin`. ([\#11452](https://github.com/matrix-org/synapse/issues/11452), [\#11455](https://github.com/matrix-org/synapse/issues/11455))
-- Extend the `scripts-dev/sign_json` script to support signing events. ([\#11486](https://github.com/matrix-org/synapse/issues/11486))
-- Improve internal types in push code. ([\#11409](https://github.com/matrix-org/synapse/issues/11409))
-- Improve type annotations in `synapse.module_api`. ([\#11029](https://github.com/matrix-org/synapse/issues/11029))
-- Improve type hints for `LruCache`. ([\#11453](https://github.com/matrix-org/synapse/issues/11453))
-- Preparation for database schema simplifications: disambiguate queries on `state_key`. ([\#11497](https://github.com/matrix-org/synapse/issues/11497))
-- Refactor `backfilled` into specific behavior function arguments (`_persist_events_and_state_updates` and downstream calls). ([\#11417](https://github.com/matrix-org/synapse/issues/11417))
-- Refactor `get_version_string` to fix-up types and duplicated code. ([\#11468](https://github.com/matrix-org/synapse/issues/11468))
-- Refactor various parts of the `/sync` handler. ([\#11494](https://github.com/matrix-org/synapse/issues/11494), [\#11515](https://github.com/matrix-org/synapse/issues/11515))
-- Remove unnecessary `json.dumps` from `tests.rest.admin`. ([\#11461](https://github.com/matrix-org/synapse/issues/11461))
-- Save the OpenID Connect session ID on login. ([\#11482](https://github.com/matrix-org/synapse/issues/11482))
-- Update and clean up recently ported documentation pages. ([\#11466](https://github.com/matrix-org/synapse/issues/11466))
-
-
 Synapse 1.48.0 (2021-11-30)
 ===========================


6

debian/changelog

vendored


@@ -1,9 +1,3 @@
-matrix-synapse-py3 (1.49.0~rc1) stable; urgency=medium
-
-  * New synapse release 1.49.0~rc1.
-
- -- Synapse Packaging team <packages@matrix.org>  Tue, 07 Dec 2021 13:52:21 +0000
-
 matrix-synapse-py3 (1.48.0) stable; urgency=medium

   * New synapse release 1.48.0.

@@ -21,6 +21,3 @@ VOLUME ["/data"]
 # files to run the desired worker configuration. Will start supervisord.
 COPY ./docker/configure_workers_and_start.py /configure_workers_and_start.py
 ENTRYPOINT ["/configure_workers_and_start.py"]
-
-HEALTHCHECK --start-period=5s --interval=15s --timeout=5s \
-    CMD /bin/sh /healthcheck.sh

@@ -1,6 +0,0 @@
-#!/bin/sh
-# This healthcheck script is designed to return OK when every
-# host involved returns OK
-{%- for healthcheck_url in healthcheck_urls %}
-curl -fSs {{ healthcheck_url }} || exit 1
-{%- endfor %}

@@ -474,16 +474,10 @@ def generate_worker_files(environ, config_path: str, data_dir: str):

     # Determine the load-balancing upstreams to configure
     nginx_upstream_config = ""
-
-    # At the same time, prepare a list of internal endpoints to healthcheck
-    # starting with the main process which exists even if no workers do.
-    healthcheck_urls = ["http://localhost:8080/health"]
-
     for upstream_worker_type, upstream_worker_ports in nginx_upstreams.items():
         body = ""
         for port in upstream_worker_ports:
             body += " server localhost:%d;\n" % (port,)
-            healthcheck_urls.append("http://localhost:%d/health" % (port,))

         # Add to the list of configured upstreams
         nginx_upstream_config += NGINX_UPSTREAM_CONFIG_BLOCK.format(
@@ -516,13 +510,6 @@ def generate_worker_files(environ, config_path: str, data_dir: str):
         worker_config=supervisord_config,
     )

-    # healthcheck config
-    convert(
-        "/conf/healthcheck.sh.j2",
-        "/healthcheck.sh",
-        healthcheck_urls=healthcheck_urls,
-    )
-
     # Ensure the logging directory exists
     log_dir = data_dir + "/logs"
     if not os.path.exists(log_dir):
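The healthcheck pieces removed in the hunks above fit together in two steps: the start-up script collects one `/health` URL per process, then renders a shell script that probes each URL with `curl`. The following is a minimal sketch of that flow, not the code from the commit. It assumes plain string formatting in place of the Jinja2 template, and the helper names `collect_healthcheck_urls` and `render_healthcheck_script`, as well as the worker names and ports, are made up for illustration.

```python
from typing import Dict, List

# Health endpoint of the main process, as in the removed code.
MAIN_PROCESS_HEALTH_URL = "http://localhost:8080/health"


def collect_healthcheck_urls(nginx_upstreams: Dict[str, List[int]]) -> List[str]:
    """Return the main process URL followed by one URL per worker port."""
    urls = [MAIN_PROCESS_HEALTH_URL]
    for ports in nginx_upstreams.values():
        for port in ports:
            urls.append("http://localhost:%d/health" % (port,))
    return urls


def render_healthcheck_script(healthcheck_urls: List[str]) -> str:
    """Hypothetical stand-in for rendering healthcheck.sh.j2 without Jinja2."""
    lines = [
        "#!/bin/sh",
        "# This healthcheck script is designed to return OK when every",
        "# host involved returns OK",
    ]
    # One probe per endpoint; the script exits non-zero on the first failure.
    lines.extend("curl -fSs %s || exit 1" % (url,) for url in healthcheck_urls)
    return "\n".join(lines) + "\n"


# Illustrative worker layout; the names and ports are assumptions.
example_upstreams = {"synchrotron": [18009, 18010], "media_repository": [18011]}
example_urls = collect_healthcheck_urls(example_upstreams)
example_script = render_healthcheck_script(example_urls)
```

Because every probe ends in `|| exit 1`, the container is reported healthy only when the main process and all workers respond.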

@@ -44,7 +44,6 @@
 - [Presence router callbacks](modules/presence_router_callbacks.md)
 - [Account validity callbacks](modules/account_validity_callbacks.md)
 - [Password auth provider callbacks](modules/password_auth_provider_callbacks.md)
-- [Background update controller callbacks](modules/background_update_controller_callbacks.md)
 - [Porting a legacy module to the new interface](modules/porting_legacy_module.md)
 - [Workers](workers.md)
 - [Using `synctl` with Workers](synctl_workers.md)
@@ -65,15 +64,9 @@
 - [Statistics](admin_api/statistics.md)
 - [Users](admin_api/user_admin_api.md)
 - [Server Version](admin_api/version_api.md)
-- [Federation](usage/administration/admin_api/federation.md)
 - [Manhole](manhole.md)
 - [Monitoring](metrics-howto.md)
-- [Understanding Synapse Through Grafana Graphs](usage/administration/understanding_synapse_through_grafana_graphs.md)
-- [Useful SQL for Admins](usage/administration/useful_sql_for_admins.md)
-- [Database Maintenance Tools](usage/administration/database_maintenance_tools.md)
-- [State Groups](usage/administration/state_groups.md)
 - [Request log format](usage/administration/request_log.md)
-- [Admin FAQ](usage/administration/admin_faq.md)
 - [Scripts]()

 # Development
@@ -101,4 +94,3 @@

 # Other
 - [Dependency Deprecation Policy](deprecation_policy.md)
-- [Running Synapse on a Single-Board Computer](other/running_synapse_on_single_board_computers.md)

@@ -38,15 +38,16 @@ Most-recent-in-time events in the DAG which are not referenced by any other even
 The forward extremities of a room are used as the `prev_events` when the next event is sent.


-## Backward extremity
+## Backwards extremity

 The current marker of where we have backfilled up to and will generally be the
-`prev_events` of the oldest-in-time events we have in the DAG. This gives a starting point when
-backfilling history.
+oldest-in-time events we know of in the DAG.

-When we persist a non-outlier event, we clear it as a backward extremity and set
-all of its `prev_events` as the new backward extremities if they aren't already
-persisted in the `events` table.
+This is an event where we haven't fetched all of the `prev_events` for.
+
+Once we have fetched all of its `prev_events`, it's unmarked as a backwards
+extremity (although we may have formed new backwards extremities from the prev
+events during the backfilling process).


 ## Outliers

@@ -55,7 +56,8 @@ We mark an event as an `outlier` when we haven't figured out the state for the
 room at that point in the DAG yet.

 We won't *necessarily* have the `prev_events` of an `outlier` in the database,
-but it's entirely possible that we *might*.
+but it's entirely possible that we *might*. The status of whether we have all of
+the `prev_events` is marked as a [backwards extremity](#backwards-extremity).

 For example, when we fetch the event auth chain or state for a given event, we
 mark all of those claimed auth events as outliers because we haven't done the
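One of the passages touched by this hunk states a bookkeeping rule: when a non-outlier event is persisted, it stops being a backward extremity, and each of its `prev_events` that is not yet persisted becomes one. A minimal sketch of that rule on in-memory sets follows. The function name, the set-based storage, and the event IDs are assumptions for illustration; Synapse keeps this state in database tables.

```python
from typing import Iterable, Set


def persist_non_outlier(
    event_id: str,
    prev_events: Iterable[str],
    persisted: Set[str],
    backward_extremities: Set[str],
) -> None:
    """Apply the backward-extremity rule to in-memory sets (illustration only)."""
    persisted.add(event_id)
    # The event is now known, so it is no longer a backward extremity.
    backward_extremities.discard(event_id)
    # Every prev_event we have not persisted becomes a new backward extremity.
    for prev_id in prev_events:
        if prev_id not in persisted:
            backward_extremities.add(prev_id)


# "$D" is already stored and points back at "$C", which we have not fetched.
persisted: Set[str] = {"$D"}
backward_extremities: Set[str] = {"$C"}

# Backfilling "$C" reveals two further unknown events, "$A" and "$B".
persist_non_outlier("$C", ["$A", "$B"], persisted, backward_extremities)
```

After the call, `$C` is persisted and the backward extremities are `$A` and `$B`, which is where the next backfill would start.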

@@ -2,80 +2,29 @@

 *Synapse implementation-specific details for the media repository*

-The media repository
- * stores avatars, attachments and their thumbnails for media uploaded by local
-   users.
- * caches avatars, attachments and their thumbnails for media uploaded by remote
-   users.
- * caches resources and thumbnails used for
-   [URL previews](development/url_previews.md).
+The media repository is where attachments and avatar photos are stored.
+It stores attachment content and thumbnails for media uploaded by local users.
+It caches attachment content and thumbnails for media uploaded by remote users.

-All media in Matrix can be identified by a unique
-[MXC URI](https://spec.matrix.org/latest/client-server-api/#matrix-content-mxc-uris),
-consisting of a server name and media ID:
-```
-mxc://<server-name>/<media-id>
-```
+## Storage

-## Local Media
-Synapse generates 24 character media IDs for content uploaded by local users.
-These media IDs consist of upper and lowercase letters and are case-sensitive.
-Other homeserver implementations may generate media IDs differently.
+Each item of media is assigned a `media_id` when it is uploaded.
+The `media_id` is a randomly chosen, URL safe 24 character string.

-Local media is recorded in the `local_media_repository` table, which includes
-metadata such as MIME types, upload times and file sizes.
-Note that this table is shared by the URL cache, which has a different media ID
-scheme.
+Metadata such as the MIME type, upload time and length are stored in the
+sqlite3 database indexed by `media_id`.

-### Paths
-A file with media ID `aabbcccccccccccccccccccc` and its `128x96` `image/jpeg`
-thumbnail, created by scaling, would be stored at:
-```
-local_content/aa/bb/cccccccccccccccccccc
-local_thumbnails/aa/bb/cccccccccccccccccccc/128-96-image-jpeg-scale
-```
+Content is stored on the filesystem under a `"local_content"` directory.

-## Remote Media
-When media from a remote homeserver is requested from Synapse, it is assigned
-a local `filesystem_id`, with the same format as locally-generated media IDs,
-as described above.
+Thumbnails are stored under a `"local_thumbnails"` directory.

-A record of remote media is stored in the `remote_media_cache` table, which
-can be used to map remote MXC URIs (server names and media IDs) to local
-`filesystem_id`s.
+The item with `media_id` `"aabbccccccccdddddddddddd"` is stored under
+`"local_content/aa/bb/ccccccccdddddddddddd"`. Its thumbnail with width
+`128` and height `96` and type `"image/jpeg"` is stored under
+`"local_thumbnails/aa/bb/ccccccccdddddddddddd/128-96-image-jpeg"`

-### Paths
-A file from `matrix.org` with `filesystem_id` `aabbcccccccccccccccccccc` and its
-`128x96` `image/jpeg` thumbnail, created by scaling, would be stored at:
-```
-remote_content/matrix.org/aa/bb/cccccccccccccccccccc
-remote_thumbnail/matrix.org/aa/bb/cccccccccccccccccccc/128-96-image-jpeg-scale
-```
-Older thumbnails may omit the thumbnailing method:
-```
-remote_thumbnail/matrix.org/aa/bb/cccccccccccccccccccc/128-96-image-jpeg
-```
-
-Note that `remote_thumbnail/` does not have an `s`.
-
-## URL Previews
-See [URL Previews](development/url_previews.md) for documentation on the URL preview
-process.
-
-When generating previews for URLs, Synapse may download and cache various
-resources, including images. These resources are assigned temporary media IDs
-of the form `yyyy-mm-dd_aaaaaaaaaaaaaaaa`, where `yyyy-mm-dd` is the current
-date and `aaaaaaaaaaaaaaaa` is a random sequence of 16 case-sensitive letters.
-
-The metadata for these cached resources is stored in the
-`local_media_repository` and `local_media_repository_url_cache` tables.
-
-Resources for URL previews are deleted after a few days.
-
-### Paths
-The file with media ID `yyyy-mm-dd_aaaaaaaaaaaaaaaa` and its `128x96`
-`image/jpeg` thumbnail, created by scaling, would be stored at:
-```
-url_cache/yyyy-mm-dd/aaaaaaaaaaaaaaaa
-url_cache_thumbnails/yyyy-mm-dd/aaaaaaaaaaaaaaaa/128-96-image-jpeg-scale
-```
+Remote content is cached under `"remote_content"` directory. Each item of
+remote content is assigned a local `"filesystem_id"` to ensure that the
+directory structure `"remote_content/server_name/aa/bb/ccccccccdddddddddddd"`
+is appropriate. Thumbnails for remote content are stored under
+`"remote_thumbnail/server_name/..."`
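The path layouts described in this hunk come down to a few string operations on the media ID. The following is a minimal sketch, assuming paths relative to the media store root. The function names are hypothetical and are not claimed to match Synapse's own helpers.

```python
def local_media_filepath(media_id: str) -> str:
    """Path of locally uploaded content: two 2-character levels, then the rest."""
    return "local_content/%s/%s/%s" % (media_id[0:2], media_id[2:4], media_id[4:])


def local_media_thumbnail(
    media_id: str, width: int, height: int, content_type: str, method: str
) -> str:
    """Path of a thumbnail, e.g. ending in 128-96-image-jpeg-scale."""
    top_level_type, sub_type = content_type.split("/")
    file_name = "%d-%d-%s-%s-%s" % (width, height, top_level_type, sub_type, method)
    return "local_thumbnails/%s/%s/%s/%s" % (
        media_id[0:2],
        media_id[2:4],
        media_id[4:],
        file_name,
    )


def remote_media_filepath(server_name: str, filesystem_id: str) -> str:
    """Path of cached remote content, keyed by origin server and local filesystem_id."""
    return "remote_content/%s/%s/%s/%s" % (
        server_name,
        filesystem_id[0:2],
        filesystem_id[2:4],
        filesystem_id[4:],
    )


def url_cache_filepath(media_id: str) -> str:
    """Path of a URL preview resource with an ID of the form yyyy-mm-dd_<random>."""
    date, _, random_part = media_id.partition("_")
    return "url_cache/%s/%s" % (date, random_part)


# Same shape as the example ID in the text: "aabb" followed by twenty "c"s.
example_id = "aabb" + "c" * 20
example_content_path = local_media_filepath(example_id)
example_thumbnail_path = local_media_thumbnail(example_id, 128, 96, "image/jpeg", "scale")
```

Splitting on the first four characters keeps any single directory from accumulating every file in the store.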

@@ -1,71 +0,0 @@
-# Background update controller callbacks
-
-Background update controller callbacks allow module developers to control (e.g. rate-limit)
-how database background updates are run. A database background update is an operation
-Synapse runs on its database in the background after it starts. It's usually used to run
-database operations that would take too long if they were run at the same time as schema
-updates (which are run on startup) and delay Synapse's startup too much: populating a
-table with a big amount of data, adding an index on a big table, deleting superfluous data,
-etc.
-
-Background update controller callbacks can be registered using the module API's
-`register_background_update_controller_callbacks` method. Only the first module (in order
-of appearance in Synapse's configuration file) calling this method can register background
-update controller callbacks, subsequent calls are ignored.
-
-The available background update controller callbacks are:
-
-### `on_update`
-
-_First introduced in Synapse v1.49.0_
-
-```python
-def on_update(update_name: str, database_name: str, one_shot: bool) -> AsyncContextManager[int]
-```
-
-Called when about to do an iteration of a background update. The module is given the name
-of the update, the name of the database, and a flag to indicate whether the background
-update will happen in one go and may take a long time (e.g. creating indices). If this last
-argument is set to `False`, the update will be run in batches.
-
-The module must return an async context manager. It will be entered before Synapse runs a
-background update; this should return the desired duration of the iteration, in
-milliseconds.
-
-The context manager will be exited when the iteration completes. Note that the duration
-returned by the context manager is a target, and an iteration may take substantially longer
-or shorter. If the `one_shot` flag is set to `True`, the duration returned is ignored.
-
-__Note__: Unlike most module callbacks in Synapse, this one is _synchronous_. This is
-because asynchronous operations are expected to be run by the async context manager.
-
-This callback is required when registering any other background update controller callback.
-
-### `default_batch_size`
-
-_First introduced in Synapse v1.49.0_
-
-```python
-async def default_batch_size(update_name: str, database_name: str) -> int
-```
-
-Called before the first iteration of a background update, with the name of the update and
-of the database. The module must return the number of elements to process in this first
-iteration.
-
-If this callback is not defined, Synapse will use a default value of 100.
-
-### `min_batch_size`
-
-_First introduced in Synapse v1.49.0_
-
-```python
-async def min_batch_size(update_name: str, database_name: str) -> int
-```
-
-Called before running a new batch for a background update, with the name of the update and
-of the database. The module must return an integer representing the minimum number of
-elements to process in this iteration. This number must be at least 1, and is used to
-ensure that progress is always made.
-
-If this callback is not defined, Synapse will use a default value of 100.

@ -71,15 +71,15 @@ Modules **must** register their web resources in their `__init__` method.

|

||||

## Registering a callback

|

||||

|

||||

Modules can use Synapse's module API to register callbacks. Callbacks are functions that

|

||||

Synapse will call when performing specific actions. Callbacks must be asynchronous (unless

|

||||

specified otherwise), and are split in categories. A single module may implement callbacks

|

||||

from multiple categories, and is under no obligation to implement all callbacks from the

|

||||

categories it registers callbacks for.

|

||||

Synapse will call when performing specific actions. Callbacks must be asynchronous, and

|

||||

are split in categories. A single module may implement callbacks from multiple categories,

|

||||

and is under no obligation to implement all callbacks from the categories it registers

|

||||

callbacks for.

|

||||

|

||||

Modules can register callbacks using one of the module API's `register_[...]_callbacks`

|

||||

methods. The callback functions are passed to these methods as keyword arguments, with

|

||||

the callback name as the argument name and the function as its value. A

|

||||

`register_[...]_callbacks` method exists for each category.

|

||||

the callback name as the argument name and the function as its value. This is demonstrated

|

||||

in the example below. A `register_[...]_callbacks` method exists for each category.

|

||||

|

||||

Callbacks for each category can be found on their respective page of the

|

||||

[Synapse documentation website](https://matrix-org.github.io/synapse).

|

||||

@ -83,7 +83,7 @@ oidc_providers:

|

||||

|

||||

### Dex

|

||||

|

||||

[Dex][dex-idp] is a simple, open-source OpenID Connect Provider.

|

||||

[Dex][dex-idp] is a simple, open-source, certified OpenID Connect Provider.

|

||||

Although it is designed to help build a full-blown provider with an

|

||||

external database, it can be configured with static passwords in a config file.

|

||||

|

||||

@ -523,7 +523,7 @@ The synapse config will look like this:

|

||||

email_template: "{{ user.email }}"

|

||||

```

|

||||

|

||||

### Django OAuth Toolkit

|

||||

## Django OAuth Toolkit

|

||||

|

||||

[django-oauth-toolkit](https://github.com/jazzband/django-oauth-toolkit) is a

|

||||

Django application providing out of the box all the endpoints, data and logic

|

||||

|

||||

@ -1,74 +0,0 @@

|

||||

## Summary of performance impact of running on resource constrained devices such as SBCs

|

||||

|

||||

I've been running my homeserver on a cubietruck at home now for some time and am often replying to statements like "you need loads of ram to join large rooms" with "it works fine for me". I thought it might be useful to curate a summary of the issues you're likely to run into to help as a scaling-down guide, maybe highlight these for development work or end up as documentation. It seems that once you get up to about 4x1.5GHz arm64 4GiB these issues are no longer a problem.

|

||||

|

||||

- **Platform**: 2x1GHz armhf 2GiB ram [Single-board computers](https://wiki.debian.org/CheapServerBoxHardware), SSD, postgres.

|

||||

|

||||

### Presence

|

||||

|

||||

This is the main reason people have a poor matrix experience on resource constrained homeservers. Element web will frequently be saying the server is offline while the python process will be pegged at 100% cpu. This feature is used to tell when other users are active (have a client app in the foreground) and therefore more likely to respond, but requires a lot of network activity to maintain even when nobody is talking in a room.

|

||||

|

||||

|

||||

|

||||

While synapse does have some performance issues with presence [#3971](https://github.com/matrix-org/synapse/issues/3971), the fundamental problem is that this is an easy feature to implement for a centralised service at nearly no overhead, but federation makes it combinatorial [#8055](https://github.com/matrix-org/synapse/issues/8055). There is also a client-side config option which disables the UI and idle tracking [enable_presence_by_hs_url] to blacklist the largest instances but I didn't notice much difference, so I recommend disabling the feature entirely at the server level as well.

|

||||

|

||||

[enable_presence_by_hs_url]: https://github.com/vector-im/element-web/blob/v1.7.8/config.sample.json#L45

|

||||

|

||||

### Joining

|

||||

|

||||

Joining a "large", federated room will initially fail with the below message in Element web, but waiting a while (10-60mins) and trying again will succeed without any issue. What counts as "large" is not message history, user count, connections to homeservers or even a simple count of the state events; it is instead how long the state resolution algorithm takes. However, each of those numbers is a reasonable proxy, so we can use them as estimates, since user count is one of the few things you see before joining.

|

||||

|

||||

|

||||

|

||||

This is [#1211](https://github.com/matrix-org/synapse/issues/1211) and will also hopefully be mitigated by peeking [matrix-org/matrix-doc#2753](https://github.com/matrix-org/matrix-doc/pull/2753) so at least you don't need to wait for a join to complete before finding out if it's the kind of room you want. Note that you should first disable presence, otherwise it'll just make the situation worse [#3120](https://github.com/matrix-org/synapse/issues/3120). There is a lot of database interaction too, so make sure you've [migrated your data](../postgres.md) from the default sqlite to postgresql. Personally, I recommend patience - once the initial join is complete there's rarely any issues with actually interacting with the room, but if you like you can just block "large" rooms entirely.

|

||||

|

||||

### Sessions

|

||||

|

||||

Anything that requires modifying the device list [#7721](https://github.com/matrix-org/synapse/issues/7721) will take a while to propagate, again taking the client "Offline" until it's complete. This includes signing in and out, editing the public name and verifying e2ee. The main mitigation I recommend is to keep long-running sessions open e.g. by using Firefox SSB "Use this site in App mode" or Chromium PWA "Install Element".

|

||||

|

||||

### Recommended configuration

|

||||

|

||||

Put the below in a new file at /etc/matrix-synapse/conf.d/sbc.yaml to override the defaults in homeserver.yaml.

|

||||

|

||||

```

|

||||

# Set to false to disable presence tracking on this homeserver.

|

||||

use_presence: false

|

||||

|

||||

# When this is enabled, the room "complexity" will be checked before a user

|

||||

# joins a new remote room. If it is above the complexity limit, the server will

|

||||

# disallow joining, or will instantly leave.

|

||||

limit_remote_rooms:

|

||||

# Uncomment to enable room complexity checking.

|

||||

#enabled: true

|

||||

complexity: 3.0

|

||||

|

||||

# Database configuration

|

||||

database:

|

||||

name: psycopg2

|

||||

args:

|

||||

user: matrix-synapse

|

||||

# Generate a long, secure one with a password manager

|

||||

password: hunter2

|

||||

database: matrix-synapse

|

||||

host: localhost

|

||||

cp_min: 5

|

||||

cp_max: 10

|

||||

```

|

||||

|

||||

Currently the complexity is measured by [current_state_events / 500](https://github.com/matrix-org/synapse/blob/v1.20.1/synapse/storage/databases/main/events_worker.py#L986). You can find join times and your most complex rooms like this:

|

||||

|

||||

```

|

||||

admin@homeserver:~$ zgrep '/client/r0/join/' /var/log/matrix-synapse/homeserver.log* | awk '{print $18, $25}' | sort --human-numeric-sort

|

||||

29.922sec/-0.002sec /_matrix/client/r0/join/%23debian-fasttrack%3Apoddery.com

|

||||

182.088sec/0.003sec /_matrix/client/r0/join/%23decentralizedweb-general%3Amatrix.org

|

||||

911.625sec/-570.847sec /_matrix/client/r0/join/%23synapse%3Amatrix.org

|

||||

|

||||

admin@homeserver:~$ sudo --user postgres psql matrix-synapse --command 'select canonical_alias, joined_members, current_state_events from room_stats_state natural join room_stats_current where canonical_alias is not null order by current_state_events desc fetch first 5 rows only'

|

||||

canonical_alias | joined_members | current_state_events

|

||||

-------------------------------+----------------+----------------------

|

||||

#_oftc_#debian:matrix.org | 871 | 52355

|

||||

#matrix:matrix.org | 6379 | 10684

|

||||

#irc:matrix.org | 461 | 3751

|

||||

#decentralizedweb-general:matrix.org | 997 | 1509

|

||||

#whatsapp:maunium.net | 554 | 854

|

||||

```
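To make the `current_state_events / 500` formula concrete, here is a small sketch that applies it to the rows of the query output above and compares the result with the `complexity: 3.0` limit from the sample configuration. The helper names are hypothetical, and treating "above the limit" as a strict `>` comparison is an assumption based on the wording of the config comment.

```python
COMPLEXITY_DIVISOR = 500  # from the formula quoted above
COMPLEXITY_LIMIT = 3.0    # `complexity` value in the sample sbc.yaml

# (canonical_alias, current_state_events), copied from the query output above.
rooms = [
    ("#_oftc_#debian:matrix.org", 52355),
    ("#matrix:matrix.org", 10684),
    ("#irc:matrix.org", 3751),
    ("#decentralizedweb-general:matrix.org", 1509),
    ("#whatsapp:maunium.net", 854),
]


def complexity(current_state_events: int) -> float:
    """Room complexity as described above: state events divided by 500."""
    return current_state_events / COMPLEXITY_DIVISOR


def over_limit(rooms, limit=COMPLEXITY_LIMIT):
    """Aliases of rooms whose estimated complexity is above the limit."""
    return [alias for alias, events in rooms if complexity(events) > limit]


for alias, events in rooms:
    print(f"{alias}: {complexity(events):.2f}")
```

With these numbers, only `#whatsapp:maunium.net` (854 state events) stays under a limit of 3.0; the other four rooms listed would be over it.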

|

||||

@ -118,9 +118,6 @@ performance:

|

||||

Note that the appropriate values for those fields depend on the amount

|

||||

of free memory the database host has available.

|

||||

|

||||

Additionally, admins of large deployments might want to consider using huge pages

|

||||

to help manage memory, especially when using large values of `shared_buffers`. You

|

||||

can read more about that [here](https://www.postgresql.org/docs/10/kernel-resources.html#LINUX-HUGE-PAGES).

|

||||

|

||||

## Porting from SQLite

|

||||

|

||||

|

||||

@ -1209,44 +1209,6 @@ oembed:

|

||||

#

|

||||

#session_lifetime: 24h

|

||||

|

||||

# Time that an access token remains valid for, if the session is

|

||||

# using refresh tokens.

|

||||

# For more information about refresh tokens, please see the manual.

|

||||

# Note that this only applies to clients which advertise support for

|

||||

# refresh tokens.

|

||||

#

|

||||

# Note also that this is calculated at login time and refresh time:

|

||||

# changes are not applied to existing sessions until they are refreshed.

|

||||

#

|

||||

# By default, this is 5 minutes.

|

||||

#

|

||||

#refreshable_access_token_lifetime: 5m

|

||||

|

||||

# Time that a refresh token remains valid for (provided that it is not

|

||||

# exchanged for another one first).

|

||||

# This option can be used to automatically log-out inactive sessions.

|

||||

# Please see the manual for more information.

|

||||

#

|

||||

# Note also that this is calculated at login time and refresh time:

|

||||

# changes are not applied to existing sessions until they are refreshed.

|

||||

#

|

||||

# By default, this is infinite.

|

||||

#

|

||||

#refresh_token_lifetime: 24h

|

||||

|

||||

# Time that an access token remains valid for, if the session is NOT

|

||||

# using refresh tokens.

|

||||

# Please note that not all clients support refresh tokens, so setting

|

||||

# this to a short value may be inconvenient for some users who will

|

||||

# then be logged out frequently.

|

||||

#

|

||||

# Note also that this is calculated at login time: changes are not applied

|

||||

# retrospectively to existing sessions for users that have already logged in.

|

||||

#

|

||||

# By default, this is infinite.

|

||||

#

|

||||

#nonrefreshable_access_token_lifetime: 24h

|

||||

|

||||

# The user must provide all of the below types of 3PID when registering.

|

||||

#

|

||||

#registrations_require_3pid:

|

||||

|

||||

@ -71,12 +71,7 @@ Below are the templates Synapse will look for when generating the content of an

|

||||

* `sender_avatar_url`: the avatar URL (as a `mxc://` URL) for the event's

|

||||

sender

|

||||

* `sender_hash`: a hash of the user ID of the sender

|

||||

* `msgtype`: the type of the message

|

||||

* `body_text_html`: html representation of the message

|

||||

* `body_text_plain`: plaintext representation of the message

|

||||

* `image_url`: mxc url of an image, when "msgtype" is "m.image"

|

||||

* `link`: a `matrix.to` link to the room

|

||||

* `avator_url`: url to the room's avator

|

||||

* `reason`: information on the event that triggered the email to be sent. It's an

|

||||

object with the following attributes:

|

||||

* `room_id`: the ID of the room the event was sent in

|

||||

|

||||

@ -1,114 +0,0 @@

|

||||

# Federation API

|

||||

|

||||

This API allows a server administrator to manage Synapse's federation with other homeservers.

|

||||

|

||||

Note: This API is new, experimental and "subject to change".

|

||||

|

||||

## List of destinations

|

||||

|

||||

This API gets the current destination retry timing info for all remote servers.

|

||||

|

||||

The list contains all the servers with which the server federates,

|

||||

regardless of whether an error occurred or not.

|

||||

If an error occurs, it may take up to 20 minutes for the error to be displayed here,

|

||||

as a complete retry must have failed.

|

||||

|

||||

The API is:

|

||||

|

||||

A standard request with no filtering:

|

||||

|

||||

```

|

||||

GET /_synapse/admin/v1/federation/destinations

|

||||

```

|

||||

|

||||

A response body like the following is returned:

|

||||

|

||||

```json

|

||||

{

|

||||

"destinations":[

|

||||

{

|

||||

"destination": "matrix.org",

|

||||

"retry_last_ts": 1557332397936,

|

||||

"retry_interval": 3000000,

|

||||

"failure_ts": 1557329397936,

|

||||

"last_successful_stream_ordering": null

|

||||

}

|

||||

],

|

||||

"total": 1

|

||||

}

|

||||

```

|

||||

|

||||

To paginate, check for `next_token` and if present, call the endpoint again

|

||||

with `from` set to the value of `next_token`. This will return a new page.

|

||||

|

||||

If the endpoint does not return a `next_token` then there are no more destinations

|

||||

to paginate through.
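The pagination loop described above could be sketched as follows. `iter_destinations` and `make_http_fetcher` are hypothetical helper names, and the base URL and admin access token are placeholders you would supply yourself; the sketch assumes the usual `Authorization: Bearer` header for admin API requests.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable, Dict, Iterator, Optional


def iter_destinations(fetch_page: Callable[[Optional[str]], Dict]) -> Iterator[Dict]:
    """Yield every destination, following `next_token` until it is absent."""
    token: Optional[str] = None
    while True:
        page = fetch_page(token)
        yield from page.get("destinations", [])
        token = page.get("next_token")
        if token is None:
            break  # no `next_token` means there are no more pages


def make_http_fetcher(base_url: str, access_token: str) -> Callable[[Optional[str]], Dict]:
    """Build a page fetcher for a real homeserver (arguments are placeholders)."""

    def fetch_page(from_token: Optional[str]) -> Dict:
        url = f"{base_url}/_synapse/admin/v1/federation/destinations"
        if from_token is not None:
            # Pass the previous page's `next_token` as the `from` parameter.
            url += "?" + urllib.parse.urlencode({"from": from_token})
        request = urllib.request.Request(
            url, headers={"Authorization": f"Bearer {access_token}"}
        )
        with urllib.request.urlopen(request) as response:
            return json.load(response)

    return fetch_page
```

Separating the loop from the HTTP call keeps the pagination logic easy to exercise with canned pages before pointing it at a live server.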

|

||||

|

||||

**Parameters**

|

||||

|

||||

The following query parameters are available:

|

||||

|

||||

- `from` - Offset in the returned list. Defaults to `0`.

|

||||

- `limit` - Maximum number of destinations to return. Defaults to `100`.

|

||||

- `order_by` - The method in which to sort the returned list of destinations.

|

||||

Valid values are:

|

||||

- `destination` - Destinations are ordered alphabetically by remote server name.

|

||||

This is the default.

|

||||

- `retry_last_ts` - Destinations are ordered by time of last retry attempt in ms.

|

||||

- `retry_interval` - Destinations are ordered by how long until next retry in ms.

|

||||

- `failure_ts` - Destinations are ordered by when the server started failing in ms.

|

||||

- `last_successful_stream_ordering` - Destinations are ordered by the stream ordering

|

||||

of the most recent successfully-sent PDU.

|

||||

- `dir` - Direction of the sort order. Either `f` for forwards or `b` for backwards. Setting

|

||||

this value to `b` will reverse the above sort order. Defaults to `f`.

|

||||

|

||||

*Caution:* The database only has an index on the column `destination`.

|

||||

This means that if a different sort order is used,

|

||||

this can cause a large load on the database, especially for large environments.

|

||||

|

||||

**Response**

|

||||

|

||||

The following fields are returned in the JSON response body:

|

||||

|

||||

- `destinations` - An array of objects, each containing information about a destination.

|

||||

Destination objects contain the following fields:

|

||||

- `destination` - string - Name of the remote server to federate with.

|

||||

- `retry_last_ts` - integer - The last time Synapse tried and failed to reach the

|

||||

remote server, in ms. This is `0` if the last attempt to communicate with the

|

||||

remote server was successful.

|

||||

- `retry_interval` - integer - How long since the last time Synapse tried to reach

|

||||

the remote server before trying again, in ms. This is `0` if no further retrying is occurring.

|

||||

- `failure_ts` - nullable integer - The first time Synapse tried and failed to reach the

|

||||

remote server, in ms. This is `null` if communication with the remote server has never failed.

|

||||

- `last_successful_stream_ordering` - nullable integer - The stream ordering of the most

|

||||

recent successfully-sent [PDU](understanding_synapse_through_grafana_graphs.md#federation)

|

||||

to this destination, or `null` if this information has not been tracked yet.

|

||||

- `next_token`: string representing a positive integer - Indication for pagination. See above.

|

||||

- `total` - integer - Total number of destinations.

|

||||

|

||||

# Destination Details API

|

||||

|

||||

This API gets the retry timing info for a specific remote server.

|

||||

|

||||

The API is:

|

||||

|

||||

```

|

||||

GET /_synapse/admin/v1/federation/destinations/<destination>

|

||||

```

|

||||

|

||||

A response body like the following is returned:

|

||||

|

||||

```json

|

||||

{

|

||||

"destination": "matrix.org",

|

||||

"retry_last_ts": 1557332397936,

|

||||

"retry_interval": 3000000,

|

||||

"failure_ts": 1557329397936,

|

||||

"last_successful_stream_ordering": null

|

||||

}

|

||||

```

|

||||

|

||||

**Response**

|

||||

|

||||

The response fields are the same as those of the `destinations` array in the

|

||||

[List of destinations](#list-of-destinations) response.

|

||||

@ -1,103 +0,0 @@

|

||||

## Admin FAQ

|

||||

|

||||

How do I become a server admin?

|

||||

---

|

||||

If your server already has an admin account you should use the user admin API to promote other accounts to become admins. See [User Admin API](../../admin_api/user_admin_api.md#Change-whether-a-user-is-a-server-administrator-or-not)

|

||||

|

||||

If you don't have any admin accounts yet you won't be able to use the admin API so you'll have to edit the database manually. Manually editing the database is generally not recommended so once you have an admin account, use the admin APIs to make further changes.

|

||||

|

||||

```sql

|

||||

UPDATE users SET admin = 1 WHERE name = '@foo:bar.com';

|

||||

```

|

||||

What servers is my server talking to?

|

||||

---

|

||||

Run this sql query on your db:

|

||||

```sql

|

||||

SELECT * FROM destinations;

|

||||

```

|

||||

|

||||

What servers are currently participating in this room?

|

||||

---

|

||||

Run this sql query on your db:

|

||||

```sql

|

||||

SELECT DISTINCT split_part(state_key, ':', 2)

|

||||

FROM current_state_events AS c

|

||||

INNER JOIN room_memberships AS m USING (room_id, event_id)

|

||||

WHERE room_id = '!cURbafjkfsMDVwdRDQ:matrix.org' AND membership = 'join';

|

||||

```

|

||||

|

||||

What users are registered on my server?

|

||||

---

|

||||

```sql

|

||||

SELECT name FROM users;

|

||||

```

|

||||

|

||||

Manually resetting passwords:

|

||||

---

|

||||

See https://github.com/matrix-org/synapse/blob/master/README.rst#password-reset

|

||||

|

||||

I have a problem with my server. Can I just delete my database and start again?

|

||||

---

|

||||

Deleting your database is unlikely to make anything better.

|

||||

|

||||

It's easy to make the mistake of thinking that you can start again from a clean slate by dropping your database, but things don't work like that in a federated network: lots of other servers have information about your server.

|

||||

|

||||

For example: other servers might think that you are in a room, your server will think that you are not, and you'll probably be unable to interact with that room in a sensible way ever again.

|

||||

|

||||

In general, there are better solutions to any problem than dropping the database. Come and seek help in https://matrix.to/#/#synapse:matrix.org.

|

||||

|

||||

There are two exceptions when it might be sensible to delete your database and start again:

|

||||

* You have *never* joined any rooms which are federated with other servers. For instance, a local deployment which the outside world can't talk to.

|

||||

* You are changing the `server_name` in the homeserver configuration. In effect this makes your server a completely new one from the point of view of the network, so in this case it makes sense to start with a clean database.

|

||||

(In both cases you probably also want to clear out the media_store.)

|

||||

|

||||

I've stuffed up access to my room, how can I delete it to free up the alias?

|

||||

---

|

||||

Using the following curl command:

|

||||

```

|

||||

curl -H 'Authorization: Bearer <access-token>' -X DELETE https://matrix.org/_matrix/client/r0/directory/room/<room-alias>

|

||||

```

|

||||

`<access-token>` - can be obtained in riot by looking in the riot settings, down the bottom is:

|

||||

Access Token:\<click to reveal\>

|

||||

|

||||

`<room-alias>` - the room alias, e.g. `#my_room:matrix.org`. This may also need to be URL-encoded, for example `%23my_room%3Amatrix.org`.

|

||||

|

||||

How can I find the lines corresponding to a given HTTP request in my homeserver log?

|

||||

---

|

||||

|

||||

Synapse tags each log line according to the HTTP request it is processing. When it finishes processing each request, it logs a line containing the words `Processed request: `. For example:

|

||||

|

||||

```

|

||||

2019-02-14 22:35:08,196 - synapse.access.http.8008 - 302 - INFO - GET-37 - ::1 - 8008 - {@richvdh:localhost} Processed request: 0.173sec/0.001sec (0.002sec, 0.000sec) (0.027sec/0.026sec/2) 687B 200 "GET /_matrix/client/r0/sync HTTP/1.1" "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36" [0 dbevts]"

|

||||

```

|

||||

|

||||

Here we can see that the request has been tagged with `GET-37`. (The tag depends on the method of the HTTP request, so might start with `GET-`, `PUT-`, `POST-`, `OPTIONS-` or `DELETE-`.) So to find all lines corresponding to this request, we can do:

|

||||

|

||||

```

|

||||

grep 'GET-37' homeserver.log

|

||||

```
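Note that a plain substring search for `GET-37` also matches longer tags such as `GET-370`. A minimal sketch of an exact match in Python, assuming the log format of the example line above (fields separated by ` - `, with the request tag in the fifth field); the function name is hypothetical and the field position will differ if your logging configuration uses another format:

```python
from typing import Iterable, List


def lines_for_request(lines: Iterable[str], tag: str) -> List[str]:
    """Return the log lines whose request-tag field equals `tag` exactly."""
    matching = []
    for line in lines:
        fields = line.split(" - ")
        # Expected order: timestamp, logger, line number, level, request tag, ...
        if len(fields) > 4 and fields[4] == tag:
            matching.append(line)
    return matching
```

For example, `lines_for_request(open("homeserver.log"), "GET-37")` would return only the lines tagged exactly `GET-37`.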

|

||||

|

||||

If you want to paste that output into a github issue or matrix room, please remember to surround it with triple-backticks (```) to make it legible (see https://help.github.com/en/articles/basic-writing-and-formatting-syntax#quoting-code).

|

||||

|

||||

|

||||

What do all those fields in the 'Processed' line mean?

|

||||

---

|

||||

See [Request log format](request_log.md).

|

||||

|

||||

|

||||

What are the biggest rooms on my server?

|

||||

---

|

||||

|

||||

```sql

|

||||

SELECT s.canonical_alias, g.room_id, count(*) AS num_rows

|

||||

FROM

|

||||

state_groups_state AS g,

|

||||

room_stats_state AS s

|

||||

WHERE g.room_id = s.room_id

|

||||

GROUP BY s.canonical_alias, g.room_id

|

||||

ORDER BY num_rows desc

|

||||

LIMIT 10;

|

||||

```

|

||||

|

||||

You can also use the [List Room API](../../admin_api/rooms.md#list-room-api)

|

||||

and `order_by` `state_events`.

|

||||

@ -1,18 +0,0 @@

|

||||

This blog post by Victor Berger explains how to use many of the tools listed on this page: https://levans.fr/shrink-synapse-database.html

|

||||

|

||||

# List of useful tools and scripts for maintaining the Synapse database

|

||||

|

||||

## [Purge Remote Media API](../../admin_api/media_admin_api.md#purge-remote-media-api)

|

||||

The purge remote media API allows server admins to purge old cached remote media.

|

||||

|

||||

## [Purge Local Media API](../../admin_api/media_admin_api.md#delete-local-media)

|

||||

This API deletes the *local* media from the disk of your own server.

|

||||

|

||||

## [Purge History API](../../admin_api/purge_history_api.md)

|

||||

The purge history API allows server admins to purge historic events from their database, reclaiming disk space.

|

||||

|

||||

## [synapse-compress-state](https://github.com/matrix-org/rust-synapse-compress-state)

|

||||

Tool for compressing (deduplicating) `state_groups_state` table.

|

||||

|

||||

## [SQL for analyzing Synapse PostgreSQL database stats](useful_sql_for_admins.md)

|

||||

Some easy SQL that reports useful stats about your Synapse database.

|

||||

@ -1,25 +0,0 @@

|

||||

# How do State Groups work?

|

||||

|

||||

As a general rule, I encourage people who want to understand the deepest darkest secrets of the database schema to drop by #synapse-dev:matrix.org and ask questions.

|

||||

|

||||

However, one question that comes up frequently is that of how "state groups" work, and why the `state_groups_state` table gets so big, so here's an attempt to answer that question.

|

||||

|

||||

We need to be able to relatively quickly calculate the state of a room at any point in that room's history. In other words, we need to know the state of the room at each event in that room. This is done as follows:

|

||||

|

||||

A sequence of events where the state is the same is grouped together into a `state_group`; the mapping is recorded in `event_to_state_groups`. (Technically speaking, since a state event usually changes the state in the room, we are recording the state of the room *after* the given event id: which is to say, to a handwavey simplification, the first event in a state group is normally a state event, and others in the same state group are normally non-state-events.)

|

||||

|

||||

`state_groups` records, for each state group, the id of the room that we're looking at, and also the id of the first event in that group. (I'm not sure if that event id is used much in practice.)

|

||||

|

||||

Now, if we stored all the room state for each `state_group`, that would be a huge amount of data. Instead, for each state group, we normally store the difference between the state in that group and some other state group, and only occasionally (every 100 state changes or so) record the full state.

|

||||

|

||||

So, most state groups have an entry in `state_group_edges` (don't ask me why it's not a column in `state_groups`) which records the previous state group in the room, and `state_groups_state` records the differences in state since that previous state group.

|

||||

|

||||

A full state group just records the event id for each piece of state in the room at that point.
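The delta scheme described above can be sketched with plain dictionaries. This is a toy in-memory model, not Synapse's actual storage code: the dictionary names merely echo the tables mentioned above, and the event ids, user id and `resolve_state` helper are made up for illustration.

```python
from typing import Dict, List, Optional, Tuple

StateKey = Tuple[str, str]      # (event type, state_key)
StateMap = Dict[StateKey, str]  # maps each piece of state to an event id

# Toy stand-ins for the tables described above.
state_group_edges: Dict[int, int] = {2: 1, 3: 2}  # state group -> previous group
state_groups_state: Dict[int, StateMap] = {
    # Group 1 has no edge, so it records the full state.
    1: {("m.room.create", ""): "$create", ("m.room.name", ""): "$name1"},
    # Groups 2 and 3 only record what changed since the previous group.
    2: {("m.room.member", "@alice:example.org"): "$join"},
    3: {("m.room.name", ""): "$name2"},
}


def resolve_state(group: int) -> StateMap:
    """Rebuild the full state for `group` by walking back through the edges."""
    chain: List[int] = []
    current: Optional[int] = group
    while current is not None:
        chain.append(current)
        current = state_group_edges.get(current)  # None once a full group is reached
    state: StateMap = {}
    # Apply the oldest (full) group first so that later deltas override it.
    for g in reversed(chain):
        state.update(state_groups_state.get(g, {}))
    return state
```

In this model, resolving group 3 starts from the full state in group 1, adds the membership from group 2, and replaces the room name with the one recorded in group 3.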

|

||||

|

||||

## Known bugs with state groups

|

||||

|

||||

There are various reasons that we can end up creating many more state groups than we need: see https://github.com/matrix-org/synapse/issues/3364 for more details.

|

||||

|

||||

## Compression tool

|

||||

|

||||

There is a tool at https://github.com/matrix-org/rust-synapse-compress-state which can compress the `state_groups_state` on a room-by-room basis (essentially, it reduces the number of "full" state groups). This can result in dramatic reductions of the storage used.

|

||||

@ -1,84 +0,0 @@

|

||||

## Understanding Synapse through Grafana graphs

|

||||

|

||||

It is possible to monitor much of the internal state of Synapse using [Prometheus](https://prometheus.io)

|

||||

metrics and [Grafana](https://grafana.com/).

|

||||

A guide for configuring Synapse to provide metrics is available [here](../../metrics-howto.md)

|

||||

and information on setting up Grafana is [here](https://github.com/matrix-org/synapse/tree/master/contrib/grafana).

|

||||

In this setup, Prometheus will periodically scrape the information Synapse provides and

|

||||

store a record of it over time. Grafana is then used as an interface to query and

|

||||

present this information through a series of pretty graphs.

|

||||

|

||||

Once you have grafana set up, and assuming you're using [our grafana dashboard template](https://github.com/matrix-org/synapse/blob/master/contrib/grafana/synapse.json), look for the following graphs when debugging a slow/overloaded Synapse:

|

||||

|

||||

## Message Event Send Time

|

||||

|

||||

|

||||

|

||||

This, along with the CPU and Memory graphs, is a good way to check the general health of your Synapse instance. It represents how long it takes for a user on your homeserver to send a message.

|

||||

|

||||

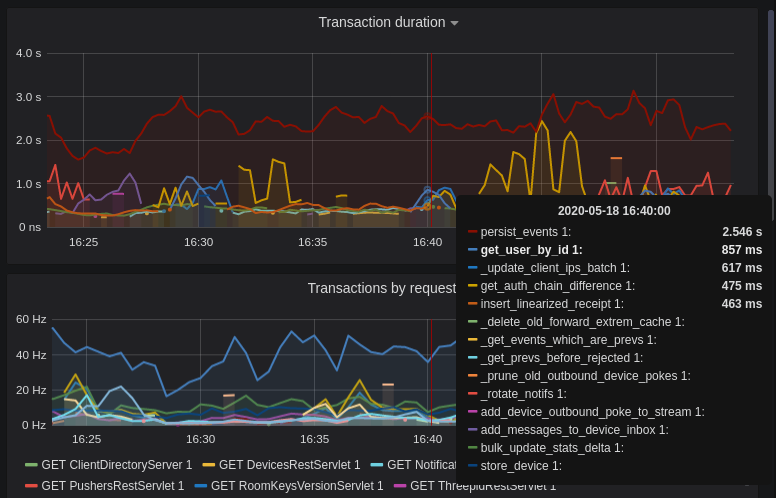

## Transaction Count and Transaction Duration

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

These graphs show the database transactions that are occurring the most frequently, as well as those that are taking the most time to execute.

|

||||

|

||||

|

||||

|

||||

In the first graph, we can see obvious spikes corresponding to lots of `get_user_by_id` transactions. This would be useful information to figure out which part of the Synapse codebase is potentially creating a heavy load on the system. However, be sure to cross-reference this with Transaction Duration, which shows that `get_user_by_id` is actually a very quick database transaction and isn't causing as much load as others, like `persist_events`:

|

||||

|

||||

|

||||

|

||||

Still, it's probably worth investigating why we're getting users from the database that often, and whether it's possible to reduce the number of queries we make by adjusting our cache factor(s).

|

||||

|

||||

The `persist_events` transaction is responsible for saving new room events to the Synapse database, so can often show a high transaction duration.

|

||||

|

||||

## Federation

|

||||

|

||||

The charts in the "Federation" section show information about incoming and outgoing federation requests. Federation data can be divided into two basic types:

|

||||

|

||||

- PDU (Persistent Data Unit) - room events: messages, state events (join/leave), etc. These are permanently stored in the database.

|

||||

- EDU (Ephemeral Data Unit) - other data, which need not be stored permanently, such as read receipts, typing notifications.

|

||||

|

||||

The "Outgoing EDUs by type" chart shows the EDUs within outgoing federation requests by type: `m.device_list_update`, `m.direct_to_device`, `m.presence`, `m.receipt`, `m.typing`.

|

||||

|

||||

If you see a large number of `m.presence` EDUs and are having trouble with too much CPU load, you can disable `presence` in the Synapse config. See also [#3971](https://github.com/matrix-org/synapse/issues/3971).

|

||||

|

||||

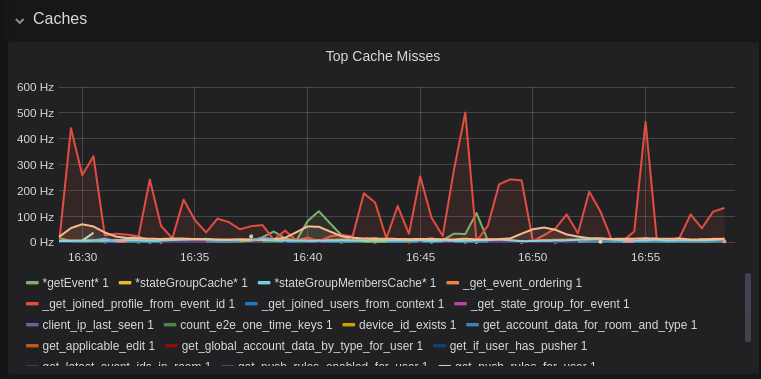

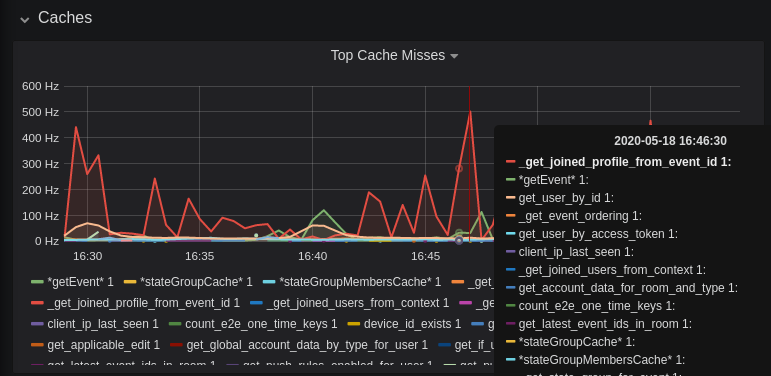

## Caches

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

This is quite a useful graph. It shows how many times Synapse attempts to retrieve a piece of data from a cache which the cache did not contain, thus resulting in a call to the database. We can see here that the `_get_joined_profile_from_event_id` cache is being requested a lot, and often the data we're after is not cached.

|

||||

|

||||

Cross-referencing this with the Eviction Rate graph, which shows that entries are being evicted from `_get_joined_profile_from_event_id` quite often:

|

||||

|

||||

|

||||

|

||||

we should probably consider raising the size of that cache by raising its cache factor (a multiplier value for the size of an individual cache). Information on doing so is available [here](https://github.com/matrix-org/synapse/blob/ee421e524478c1ad8d43741c27379499c2f6135c/docs/sample_config.yaml#L608-L642) (note that the configuration of individual cache factors through the configuration file is available in Synapse v1.14.0+, whereas doing so through environment variables has been supported for a very long time). Note that this will increase Synapse's overall memory usage.

|

||||

|

||||

## Forward Extremities

|

||||

|

||||

|

||||

|

||||

Forward extremities are the leaf events at the end of a DAG in a room, aka events that have no children. The more that exist in a room, the more [state resolution](https://spec.matrix.org/v1.1/server-server-api/#room-state-resolution) that Synapse needs to perform (hint: it's an expensive operation). While Synapse has code to prevent too many of these existing at one time in a room, bugs can sometimes make them crop up again.

|

||||

|

||||

If a room has >10 forward extremities, it's worth checking which room is the culprit and potentially removing them using the SQL queries mentioned in [#1760](https://github.com/matrix-org/synapse/issues/1760).

|

||||

|

||||

## Garbage Collection

|

||||

|

||||

|

||||

|

||||

Large spikes in garbage collection times (bigger than shown here, I'm talking in the multiple seconds range) can cause lots of problems in Synapse performance. They are more a symptom of other problems than a cause, though, so check other graphs for what might be causing them.

|

||||

|

||||

## Final Thoughts

|

||||

|

||||

If you're still having performance problems with your Synapse instance and you've

|

||||

tried everything you can, it may just be a lack of system resources. Consider adding

|

||||

more CPU and RAM, and using [worker mode](../../workers.md)

|

||||

to make use of multiple CPU cores / multiple machines for your homeserver.

|

||||

|

||||

@ -1,156 +0,0 @@

|

||||

## Some useful SQL queries for Synapse Admins

|

||||

|

||||

## Size of full matrix db

|

||||

`SELECT pg_size_pretty( pg_database_size( 'matrix' ) );`

|

||||

### Result example:

|

||||

```

|

||||

pg_size_pretty

|

||||

----------------

|