Adversarial ML Threat Matrix - Table of Contents

- Adversarial ML 101

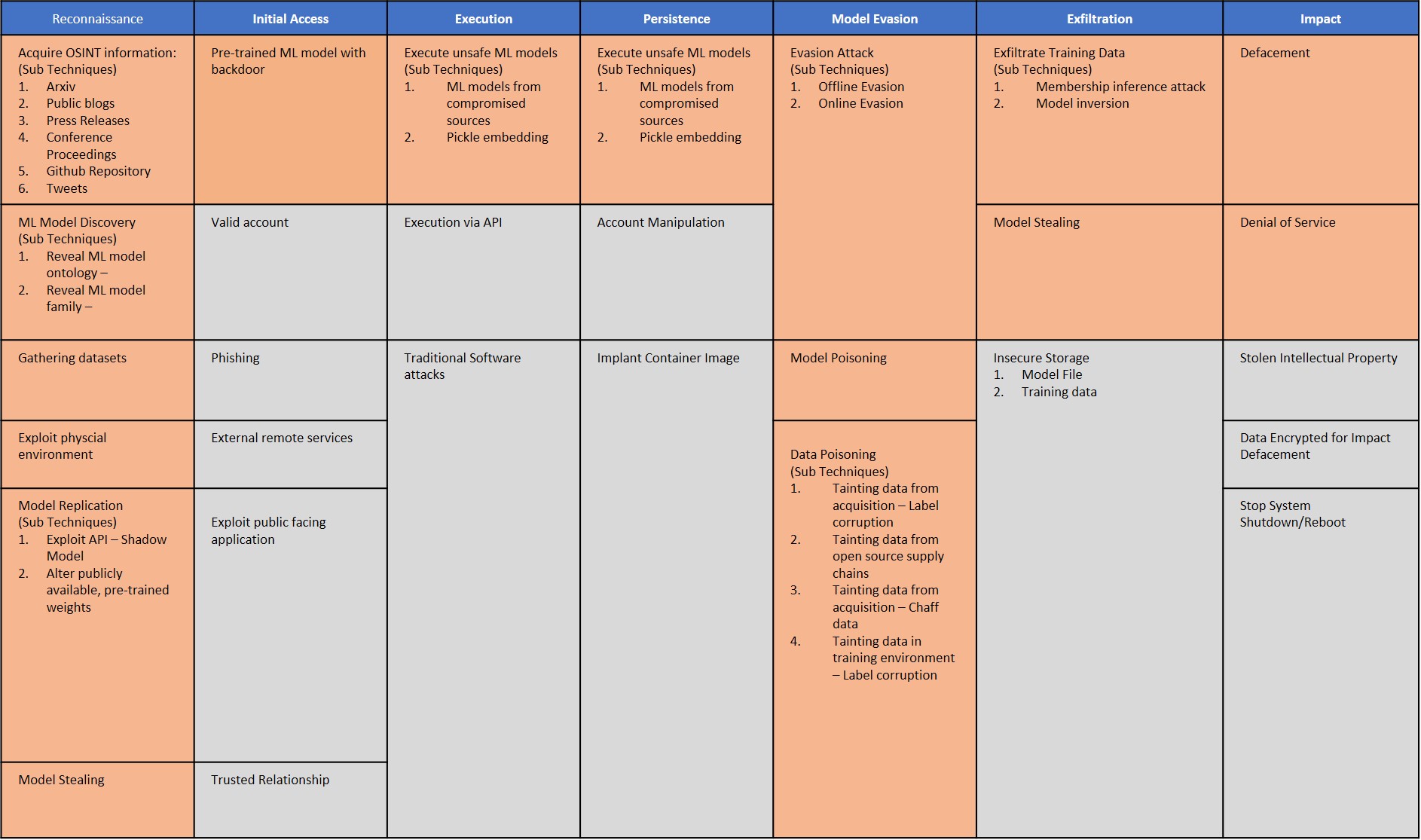

- Adversarial ML Threat Matrix

- Case Studies

- Contributors

- Feedback and Getting Involved

- Contact Us

The goal of this project is to position attacks on machine learning (ML) systems in an ATT&CK-style framework so that security analysts can orient themselves to these new and upcoming threats.

If you are new to how ML systems can be attacked, we suggest starting at this no-frills Adversarial ML 101 aimed at security analysts.

Or if you want to dive right in, head to Adversarial ML Threat Matrix.

Why Develop an Adversarial ML Threat Matrix?

- In the last three years, major companies such as Google, Amazon, Microsoft, and Tesla have had their ML systems tricked, evaded, or misled.

- This trend is only set to rise: according to a Gartner report, 30% of cyberattacks by 2022 will involve data poisoning, model theft, or adversarial examples.

- Industry is underprepared: in a survey of 28 organizations, both small and large, 25 did not know how to secure their ML systems.

Unlike traditional cybersecurity vulnerabilities that are tied to specific software and hardware systems, adversarial ML vulnerabilities are enabled by inherent limitations underlying ML algorithms. Data can be weaponized in new ways, which requires extending how we model cyber adversary behavior to reflect emerging threat vectors and the rapidly evolving adversarial machine learning attack lifecycle.

This threat matrix came out of a partnership with 12 industry and academic research groups, with the goal of empowering security analysts to orient themselves to these new and upcoming threats. The framework is seeded with a curated set of vulnerabilities and adversary behaviors that Microsoft and MITRE have vetted as effective against production ML systems. We used ATT&CK as a template since security analysts are already familiar with this type of matrix.

We recommend digging into the Adversarial ML Threat Matrix.

To see the matrix in action, see the curated case studies:

- ClearviewAI Misconfiguration

- GPT-2 Model Replication

- ProofPoint Evasion

- Tay Poisoning

- Microsoft - Azure Service - Evasion

- Microsoft Edge AI - Evasion

- MITRE - Physical Adversarial Attack on Face Identification

Contributors

| Organization | Contributors |
|---|---|
| Microsoft | Ram Shankar Siva Kumar, Hyrum Anderson, Suzy Schapperle, Blake Strom, Madeline Carmichael, Matt Swann, Mark Russinovich, Nick Beede, Kathy Vu, Andi Comissioneru, Sharon Xia, Mario Goertzel, Jeffrey Snover, Derek Adam, Deepak Manohar, Bhairav Mehta, Peter Waxman, Abhishek Gupta, Ann Johnson, Andrew Paverd, Pete Bryan, Roberto Rodriguez, Will Pearce |
| MITRE | Mikel Rodriguez, Christina Liaghati, Keith Manville, Michael Krumdick, Josh Harguess, Virginia Adams, Shiri Bendelac, Henry Conklin, Poomathi Duraisamy, David Giangrave, Emily Holt, Kyle Jackson, Nicole Lape, Sara Leary, Eliza Mace, Christopher Mobley, Savanna Smith, James Tanis, Michael Threet, David Willmes, Lily Wong |
| Bosch | Manojkumar Parmar |
| IBM | Pin-Yu Chen |
| NVIDIA | David Reber Jr., Keith Kozo, Christopher Cottrell, Daniel Rohrer |
| Airbus | Adam Wedgbury |
| PricewaterhouseCoopers | Michael Montecillo |
| Deep Instinct | Nadav Maman, Shimon Noam Oren, Ishai Rosenberg |
| Two Six Labs | David Slater |
| University of Toronto | Adelin Travers, Jonas Guan, Nicolas Papernot |
| Cardiff University | Pete Burnap |
| Software Engineering Institute/Carnegie Mellon University | Nathan M. VanHoudnos |
| Berryville Institute of Machine Learning | Gary McGraw, Harold Figueroa, Victor Shepardson, Richie Bonett |

Feedback and Getting Involved

The Adversarial ML Threat Matrix is a first-cut attempt at collating a knowledge base of how ML systems can be attacked. We need your help to make it holistic and fill in the gaps!

Corrections and Improvement

- For immediate corrections, please submit a Pull Request with suggested changes! We are excited to make this system better with you!

- For a more hands-on feedback session, we are partnering with Defcon's AI Village to open up the framework to all community members for feedback and improvement. The current thinking is to hold this workshop circa Jan/Feb 2021. Please register here.

Contribute Case Studies

We are especially excited for new case studies! We look forward to contributions from both industry and academic researchers. Before submitting a case study, confirm that:

- The attack exploits one or more vulnerabilities that compromise the confidentiality, integrity, or availability of an ML system.

- The attack was against a production, commercial ML system. This can be an MLaaS offering such as Amazon, Microsoft Azure, Google Cloud AI, or IBM Watson, or an ML system embedded in a client/edge device.

- You have permission to share the information or have published this research. Please follow the proper channels before reporting a new attack, and make sure you are practicing responsible disclosure.

You can email advmlthreatmatrix-core@googlegroups.com with a summary of the incident and its Adversarial ML Threat Matrix mapping.

Join our Mailing List

- For discussions around Adversarial ML Threat Matrix, we invite everyone to join our Google Group here.

- Note: Google Groups generally defaults to your personal email. If you would rather access this forum using your corporate email (as opposed to your Gmail), you can create a Google account using your corporate email before joining the group.

- Also, most email clients route emails from Google Groups into an "Other", "Spam", or "Forums" folder, so you may want to create a rule in your email client to send these emails to your inbox instead.

Contact Us

For corrections and improvement or to contribute a case study, see Feedback.

- For general questions/comments/discussion, our public email group is advmlthreatmatrix-core@googlegroups.com. This emails all the members of the distribution group.

- For private comments/discussions and how organizations can get involved in the effort, please email: Ram.Shankar@microsoft.com and Mikel@mitre.org.